EngagedLab: from static course content to an interactive lab workflow

This internal EdTechLab case study documents the current EngagedLab workflow: source material in, structured interactive lab out, with preview, export, and Blackboard-ready handoff already visible in the product flow.

Current evidence

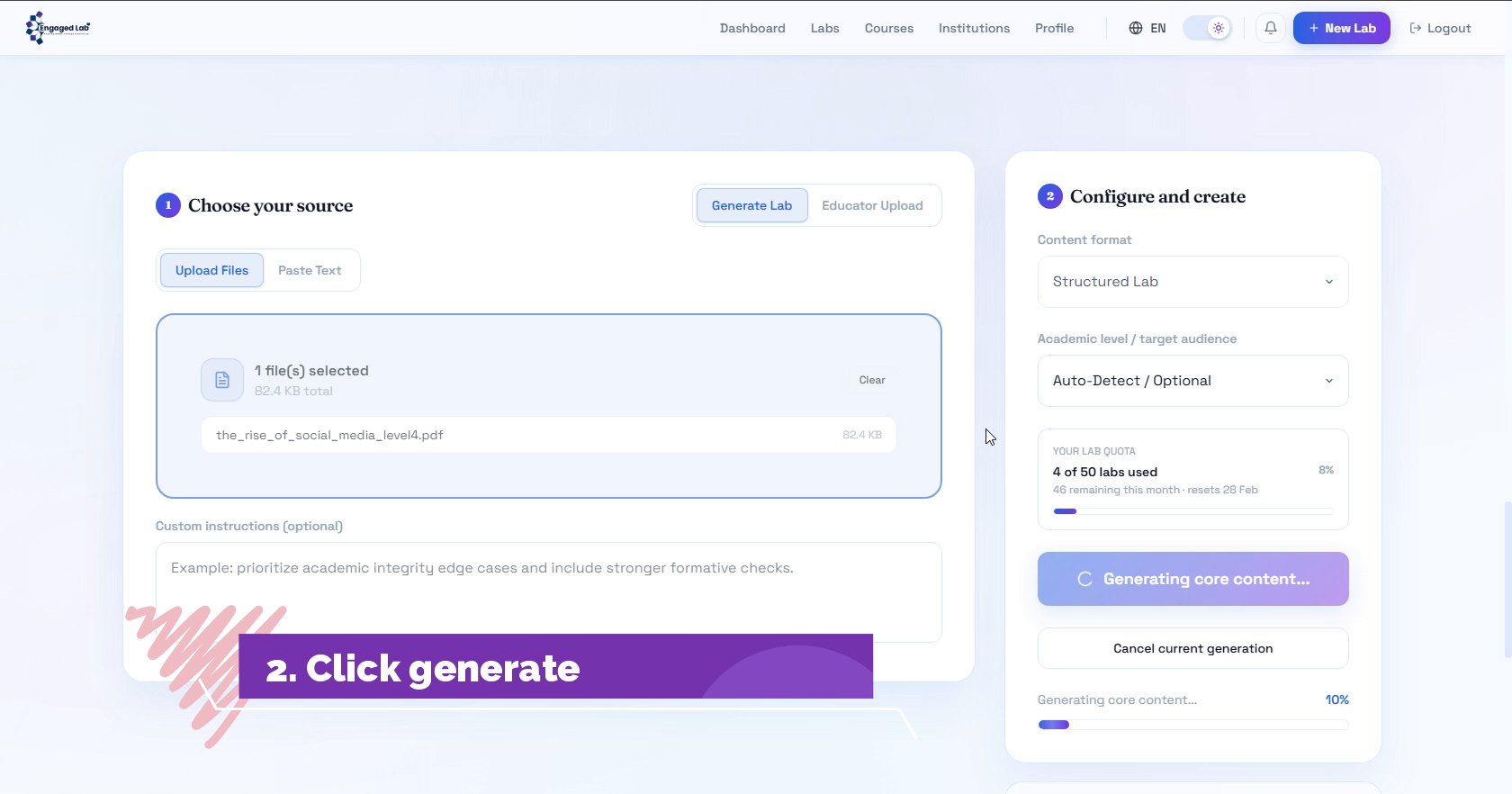

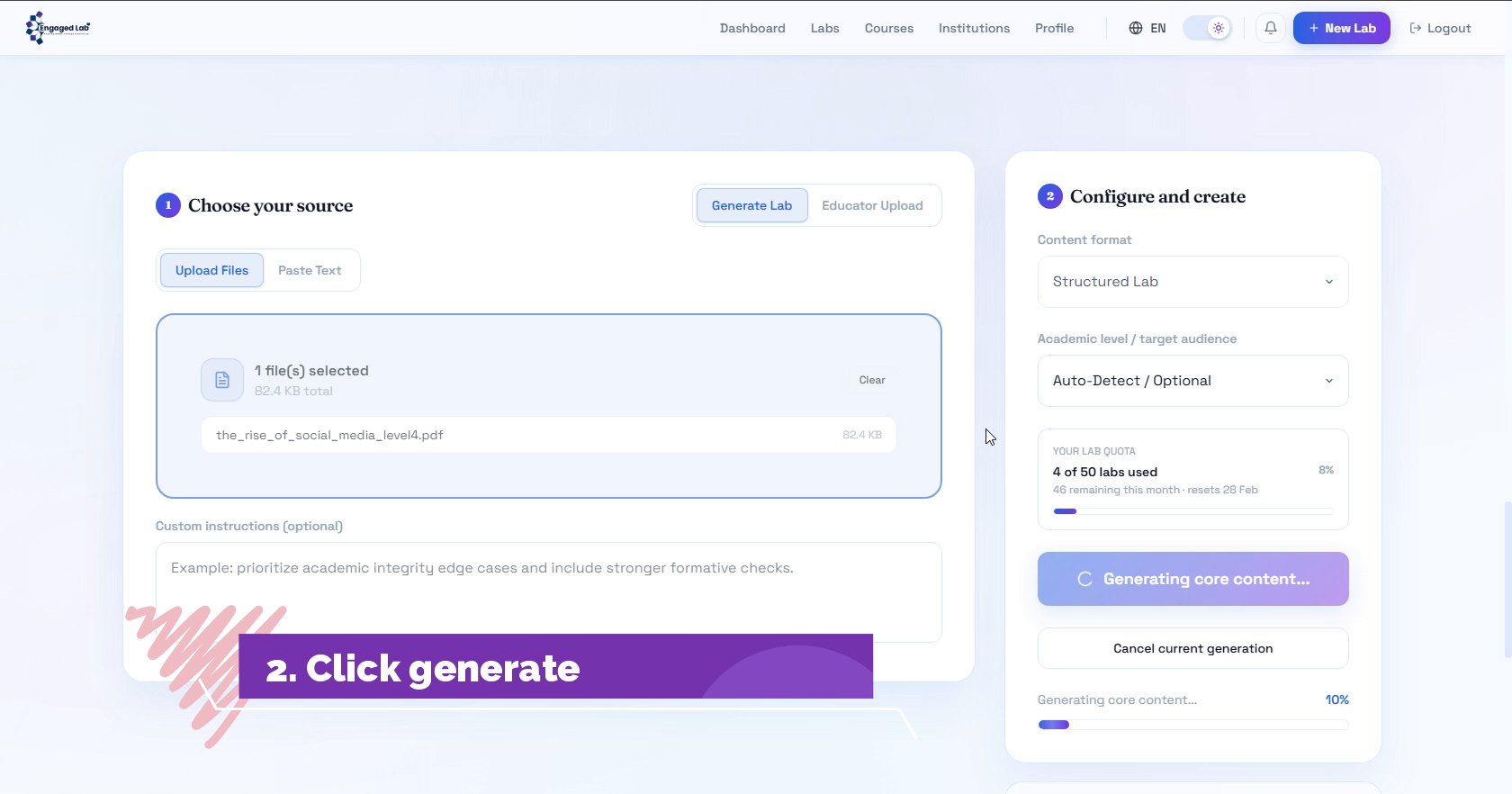

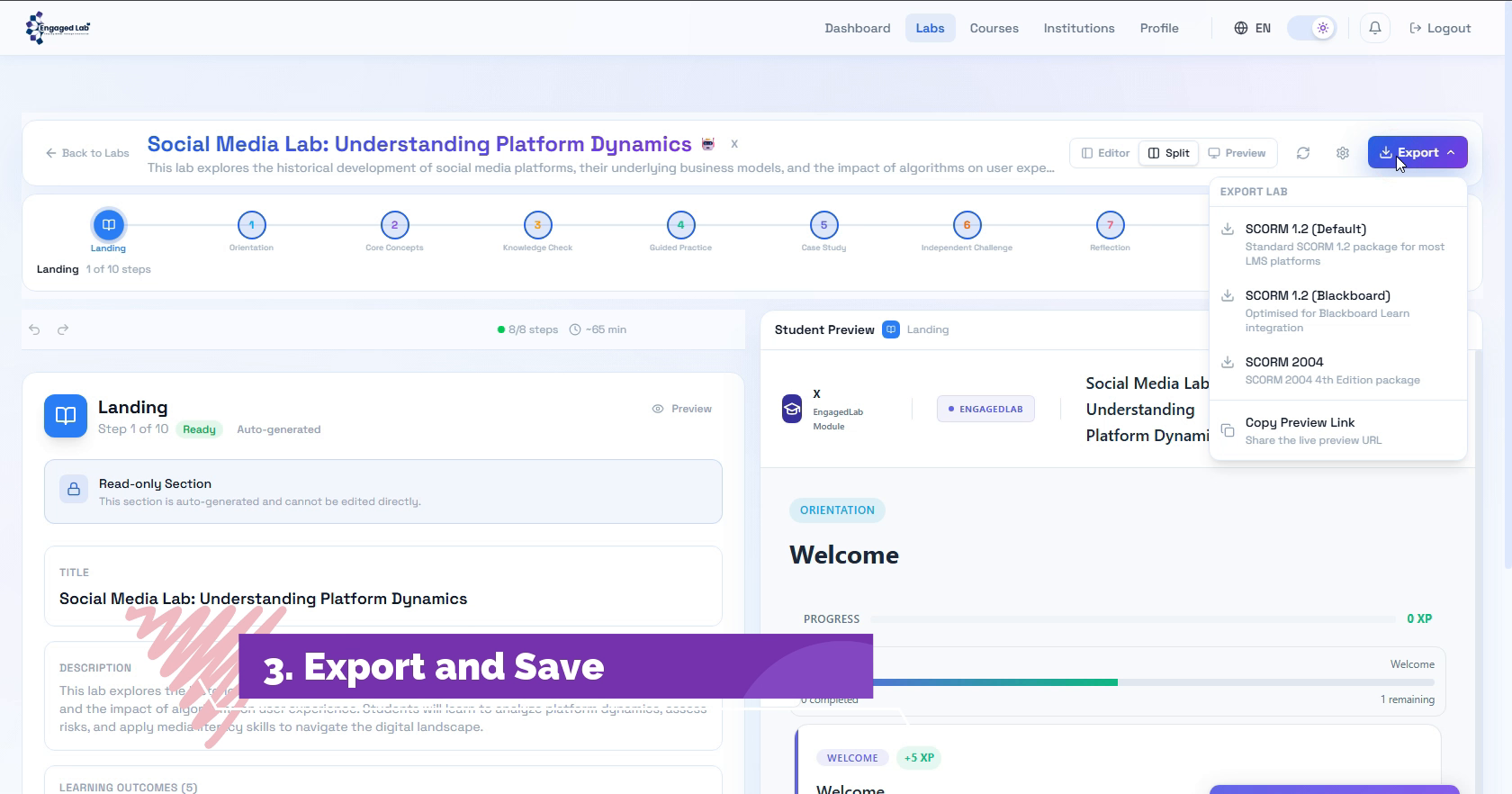

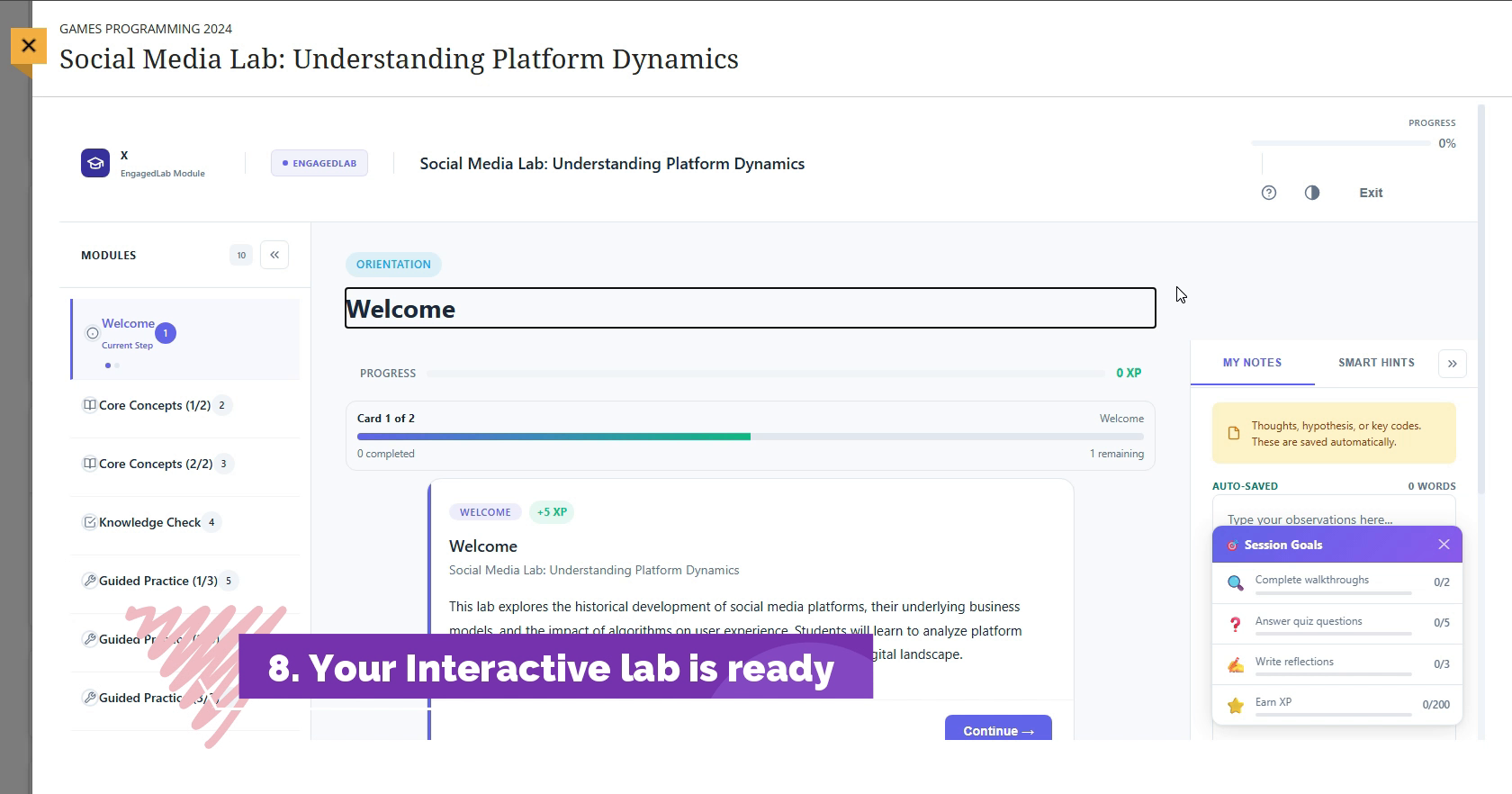

The current demonstration clip walks through an eight-step path from creation to preview and export in about 30 seconds, covering source upload, lab generation, student preview, SCORM export, and Blackboard-oriented packaging.

In brief

- EngagedLab is designed to convert passive source material into sequenced interactive labs.

- Current product proof includes a live walkthrough, student preview, and SCORM export flow.

- The product direction is informed by active-learning evidence and analytics-governance standards.

- This page documents present workflow evidence honestly rather than claiming institutional outcomes that do not yet exist.

Why this problem matters

Course teams often begin with PDFs, slides, and reading packs because they are easy to circulate. Those formats distribute information, but they do not automatically create a participation-rich learning experience. Widely cited reviews and meta-analyses of active learning, from Prince, Freeman and colleagues, and Theobald and colleagues, all point in the same direction: more structured active learning can improve performance and narrow achievement gaps, particularly in the undergraduate STEM settings where most of this evidence was gathered.

EngagedLab is built around the practical implication of that literature. The product does not claim to create better teaching on its own. Its claim is narrower and more defensible: a product should make it easier for an educator or programme team to move from passive content into structured, interactive learning steps without rebuilding the whole experience manually.

What the current workflow does

The current product flow begins with source selection, then moves through generation, configuration, and preview before exposing export routes that fit established LMS practice. In the current walkthrough, the output is a structured lab with numbered stages, a student preview, and export options that include SCORM 1.2, SCORM 2004, and a Blackboard-oriented setup path.
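To make that ordering concrete, here is a minimal Python sketch of the flow modelled as an ordered sequence of stages, where export routes only open once a student preview exists. The stage names, the `ExportTarget` string values, and the `LabDraft` class are hypothetical illustrations chosen for this sketch, not EngagedLab's actual data model or API.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    # Hypothetical stage names mirroring the order described above.
    SOURCE = 1
    GENERATED = 2
    CONFIGURED = 3
    PREVIEWED = 4


class ExportTarget(Enum):
    # Export routes named in the walkthrough; the string values are illustrative.
    SCORM_12 = "scorm-1.2"
    SCORM_2004 = "scorm-2004"
    BLACKBOARD = "blackboard"


@dataclass
class LabDraft:
    """Hypothetical draft lab that can only move forward one stage at a time."""
    title: str
    stage: Stage = Stage.SOURCE
    steps: list[str] = field(default_factory=list)

    def advance(self, to: Stage) -> None:
        # Enforce the sequence: a draft cannot jump from source straight to preview.
        if to.value != self.stage.value + 1:
            raise ValueError(f"cannot move from {self.stage.name} to {to.name}")
        self.stage = to

    def export(self, target: ExportTarget) -> str:
        # Export is only offered after the student preview stage.
        if self.stage is not Stage.PREVIEWED:
            raise RuntimeError("preview the lab before exporting")
        return f"{self.title}:{target.value}"


draft = LabDraft("Titration lab", steps=["Predict", "Measure", "Explain"])
for stage in (Stage.GENERATED, Stage.CONFIGURED, Stage.PREVIEWED):
    draft.advance(stage)
print(draft.export(ExportTarget.SCORM_12))  # Titration lab:scorm-1.2
```

Placing the preview check inside `export` mirrors the design choice described in this case study: preview and export are part of one flow rather than separate admin steps.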

That matters because institutional teams rarely need only a prettier activity builder. They need an authoring flow that ends with a credible deployment path. If an interactive product cannot move cleanly into existing course operations, it remains a demo instead of an adoptable tool.

Design choices behind the build

Three design choices are already visible. First, the workflow is organised around sequenced lab steps rather than a flat page builder. Second, the preview and export layers sit inside the core product flow instead of being left to a later admin pass. Third, the product is framed as analytics-aware infrastructure rather than as visual presentation alone.

That third point is important. Jisc and 1EdTech both argue, in different ways, that learning activity data is only useful when the event model, operational purpose, and stewardship approach are considered early. EdTechLab is applying that logic here: the interface should not be designed in isolation from the evidence model the product will later need to support.
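One way to read that principle in code is to declare the events each lab step may emit, together with a stated purpose and a retention period, at the same time as the step itself, so nothing can be recorded without a declared reason. The `EventSpec` and `LabStep` types, the `record` helper, their fields, and the example values below are all hypothetical; they are not taken from the Jisc or 1EdTech documents or from EngagedLab.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class EventSpec:
    # Hypothetical declaration: what is recorded, why, and for how long.
    name: str
    purpose: str
    retention_days: int


@dataclass(frozen=True)
class LabStep:
    # A lab step carries its event declarations alongside its content.
    number: int
    prompt: str
    events: tuple[EventSpec, ...]


def record(step: LabStep, event_name: str, learner_id: str) -> dict:
    """Build an event record only if the step declared that event."""
    declared = {spec.name: spec for spec in step.events}
    if event_name not in declared:
        raise KeyError(f"step {step.number} does not declare '{event_name}'")
    spec = declared[event_name]
    return {
        "step": step.number,
        "event": spec.name,
        "purpose": spec.purpose,
        "retention_days": spec.retention_days,
        "learner": learner_id,
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }


step = LabStep(
    number=2,
    prompt="Predict the endpoint before running the simulation.",
    events=(EventSpec("answer_submitted", "formative feedback to the learner", 180),),
)
print(record(step, "answer_submitted", "learner-001")["purpose"])
# formative feedback to the learner
```

The point of the sketch is the coupling, not the specific fields: an undeclared event raises an error at the point of recording, so purpose and stewardship decisions have to be made when the step is designed.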

What is visible today

This is intentionally a lab case study, not a client deployment story. The honest proof today is product proof:

- The EngagedLab public product is live.

- The current walkthrough shows creation, preview, export, and Blackboard handoff in about 30 seconds.

- The build already exposes structured steps, student preview, and LMS-ready export options.

What is not claimed here is just as important. There are no adoption metrics, no named institutional deployment outcomes, and no implied implementation scale. This page exists to show the workflow evidence that does exist and the reasoning behind the design.

Why institutions may care

For university digital teams, the value is operational fit: a clearer route from product workflow to LMS delivery. For research leads, the value is evidence quality: more structured interaction makes later analytics and evaluation easier to plan. For innovation teams, the value is speed: a product can be inspected, tested, and challenged before any institution commits to a bigger decision.

Current scope and limits

This case study documents what is live today, what the current walkthrough proves, and where the next evidence bar sits: pilot use, course-team feedback, and live analytics outputs from educational settings.

Research and standards frame

- Prince M. Does active learning work? A review of the research. Journal of Engineering Education. 2004;93(3):223-231.

- Freeman S, Eddy SL, McDonough M, et al. Active learning increases student performance in science, engineering, and mathematics. Proceedings of the National Academy of Sciences. 2014;111(23):8410-8415.

- Theobald EJ, Hill MJ, Tran E, et al. Active learning narrows achievement gaps for underrepresented students in undergraduate science, technology, engineering, and math. Proceedings of the National Academy of Sciences. 2020;117(12):6476-6483.

- Jisc. Code of Practice for Learning Analytics.

- 1EdTech. Six Steps for Effective Learning Analytics Implementation in Postsecondary Education.

Continue the review

Want to inspect the workflow, the product page, or the wider research frame?

The current product view, walkthrough, and Lab Notes article together show how EdTechLab is approaching interactive learning workflows, analytics readiness, and institutional delivery constraints.